Date

24/February/2026

- Why BFSI Databases Are Expensive and Complex

- The Role of Caching: Reducing Load Without Compromising Accuracy

- Multi-Region Replication: From DR to Business Continuity

- Migration Blueprint for High-Volume BFSI Systems

- Observability: The Missing Layer in Many BFSI Clouds

- How Ascertain Approaches Database Optimisation for BFSI

- Conclusion

Database Workload Optimisation for BFSI

Improving performance, reducing cost, and building resilience at scale

In banking and financial services, databases are rarely just databases.

They are ledgers, audit trails, risk engines, compliance records, and often the single point of failure for the entire business.

Across ASEAN, the Middle East, and South Asia, many BFSI organisations share a similar reality:

- Digital channels are growing faster than expected

- Transaction volumes spike unpredictably

- Regulatory scrutiny is increasing

- Infrastructure costs keep rising

- Yet performance issues persist

At the centre of these challenges sits one familiar culprit: poorly optimised database workloads.

Cloud adoption has helped, but only partially. Many institutions have moved databases to the cloud without modernising how they are designed, operated, and observed.

This is where database workload optimisation, guided by AWS best practices, becomes a strategic advantage rather than a technical exercise.

Why BFSI Databases Are Expensive and Complex

From an architectural standpoint, BFSI databases carry unique burdens:

- Always-on expectations

Payments, FPX transfers, e-wallets, and settlement systems operate in real time. Downtime of even a few minutes can trigger customer complaints, regulatory flags, and revenue loss.

- Mixed workload patterns

A single platform often handles:

- High-volume OLTP transactions

- Read-heavy reporting

- End-of-day reconciliation

- Regulatory queries

- Fraud analytics

Treating all of these with a single database configuration leads to inefficiency.

- Overprovisioning “just in case”

To avoid outages, many teams overprovision compute and storage, driving costs up while utilisation stays low.

- Limited observability

Without deep visibility, teams react after performance degrades instead of optimising proactively.
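The overprovisioning pattern above can be checked with a simple right-sizing heuristic. The sketch below is a minimal illustration, not an AWS or Ascertain sizing rule. It assumes CPU utilisation samples (in percent) exported from monitoring, a nearest-rank 95th percentile as the sizing signal, and a hypothetical 70% target peak. It also assumes load scales linearly with vCPU count, which real databases only approximate.

```python
import math


def p95(samples: list[float]) -> float:
    """Nearest-rank 95th percentile of utilisation samples (percent)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n), 1-based
    return ordered[rank - 1]


def recommended_vcpus(current_vcpus: int, cpu_samples: list[float],
                      target_peak_pct: float = 70.0) -> int:
    """Smallest vCPU count that keeps p95 load at or below target_peak_pct.

    Assumption: load scales linearly with vCPU count (a simplification).
    The 70% default is a hypothetical headroom policy, not AWS guidance.
    """
    # vCPUs busy at p95 = current * p95 / 100; dividing by target / 100
    # adds headroom. Both factors of 100 cancel out.
    needed = current_vcpus * p95(cpu_samples) / target_peak_pct
    return max(1, math.ceil(needed))


# Example: a 16-vCPU instance that rarely exceeds 20% CPU.
samples = [12, 15, 18, 20, 14, 11, 16, 19, 13, 17]
print(recommended_vcpus(16, samples))  # 5
```

In practice the result would be rounded up to the nearest available instance class. Memory, IOPS, and connection limits would need the same check before any instance is resized.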

AWS studies consistently show that 30–40% of cloud spend in BFSI environments is tied to inefficient database usage, not actual business demand.

In Ascertain-led architectures, including platforms such as RinggitPay, Amazon Aurora forms the core transactional backbone, ensuring strong consistency while scaling read traffic through read replicas.
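Scaling reads through replicas usually means splitting traffic at the application or proxy layer. A minimal sketch, assuming hypothetical endpoint names, works like this. Writes go to the primary (the role Aurora's cluster endpoint plays). Reads are spread round-robin across replicas. A session that has just written stays pinned to the primary for a short, hypothetical window, so it never reads its own transaction from a replica that has not caught up yet. Aurora also provides a reader endpoint that balances connections across replicas, so the round-robin step here is illustrative and is often delegated to that endpoint.

```python
import itertools


class ReadWriteRouter:
    """Sends writes to the primary and spreads reads across replicas.

    A session that has just written is pinned to the primary for
    `pin_seconds` (a hypothetical read-your-writes window), so it does
    not read stale data from a lagging replica.
    """

    def __init__(self, writer: str, readers: list[str], pin_seconds: float = 1.0):
        if not readers:
            raise ValueError("at least one reader endpoint is required")
        self.writer = writer
        self.pin_seconds = pin_seconds
        self._readers = itertools.cycle(readers)  # simple round-robin
        self._last_write: dict[str, float] = {}   # session id -> last write time

    def route(self, session_id: str, is_write: bool, now: float) -> str:
        if is_write:
            self._last_write[session_id] = now
            return self.writer
        last = self._last_write.get(session_id)
        if last is not None and now - last < self.pin_seconds:
            return self.writer  # replica may not have this write yet
        return next(self._readers)


# Hypothetical endpoint names; real ones would come from configuration.
router = ReadWriteRouter("writer", ["reader-a", "reader-b"], pin_seconds=2.0)
print(router.route("s1", is_write=True, now=100.0))   # writer
print(router.route("s1", is_write=False, now=101.0))  # writer (pinned)
print(router.route("s1", is_write=False, now=103.0))  # reader-a
```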

The Role of Caching: Reducing Load Without Compromising Accuracy

Not every request needs to hit the primary database.

Strategic caching using Amazon ElastiCache allows BFSI platforms to:

- Reduce database pressure

- Improve response times

- Lower compute costs

Common BFSI use cases include:

- Customer profile lookups

- Session and token validation

- Configuration and limit checks

- Reference data

In optimised AWS environments, caching alone can:

- Reduce database read load by 40–60%

- Improve response times by 10–20×

Ascertain typically implements caching as a supporting layer and never as a replacement for systems of record, in line with the reliability and performance efficiency pillars of the AWS Well-Architected Framework.
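A supporting cache layer like this is commonly built with the cache-aside pattern. The application reads from the cache, falls back to the database on a miss, and stores the result with a time-to-live (TTL). The sketch below is a minimal illustration that uses an in-process dictionary as a stand-in for ElastiCache, so it runs without a cluster. With a Redis or Valkey client, the store and expiry would map to a SET with an expiry. The loader function and TTL values are hypothetical.

```python
import time
from collections.abc import Callable


class CacheAside:
    """Cache-aside reads with a TTL; the database stays the system of record."""

    def __init__(self, load_from_db: Callable[[str], dict],
                 ttl_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self._load = load_from_db
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict[str, tuple[float, dict]] = {}  # key -> (expires_at, value)

    def get(self, key: str) -> dict:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit
        value = self._load(key)  # miss or expired: read the system of record
        self._store[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key: str) -> None:
        """Call after a database write commits so the next read reloads it."""
        self._store.pop(key, None)


def load_customer_profile(customer_id: str) -> dict:
    # Hypothetical loader; a real one would query the primary database.
    return {"id": customer_id, "tier": "gold"}


profiles = CacheAside(load_customer_profile, ttl_seconds=30.0)
profiles.get("C-1001")  # miss: loads from the database
profiles.get("C-1001")  # hit: served from the cache
```

The TTL bounds how stale a cached entry can become, and explicit invalidation after writes tightens that further. This suits the profile, token, configuration, and reference data listed above. Balances and ledger entries would still be read from the system of record.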

Multi-Region Replication: From DR to Business Continuity

Traditional DR models assumed downtime was acceptable. Modern BFSI regulators disagree.

Amazon Aurora Global Database enables:

- Cross-region replication with typically sub-second lag

- Read scalability across geographies

- Faster regional failover

This supports:

- Regulatory resilience requirements

- Cross-border payment operations

- Regional data access with central control

Ascertain’s cloud architectures increasingly adopt multi-region-aware designs, especially for payment ecosystems operating across Southeast Asia and the GCC.
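A multi-region-aware design also has to decide where each read is served. In the minimal sketch below, reads stay in the caller's region when that region's replica is fresh enough and fall back to the primary region otherwise. It assumes replication lag per secondary region is already collected (for example, from CloudWatch) and uses a hypothetical 1,000 ms freshness budget. Region codes are examples only. Writes are out of scope here: secondary clusters in an Aurora Global Database are read-only by default, so the sketch assumes writes always go to the primary region.

```python
def choose_read_region(client_region: str, primary_region: str,
                       lag_ms: dict[str, float],
                       max_lag_ms: float = 1000.0) -> str:
    """Serve reads locally when the regional replica is fresh enough.

    lag_ms maps secondary region -> latest observed replication lag (ms).
    A region with no lag reading is treated as unsafe for reads.
    max_lag_ms is a hypothetical freshness budget.
    """
    if client_region == primary_region:
        return primary_region
    lag = lag_ms.get(client_region)
    if lag is not None and lag <= max_lag_ms:
        return client_region
    return primary_region


# Example region codes: primary in Singapore, secondary in the UAE.
print(choose_read_region("me-central-1", "ap-southeast-1", {"me-central-1": 250.0}))
# me-central-1
```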

Migration Blueprint for High-Volume BFSI Systems

Successful database optimisation is rarely a “big bang” migration.

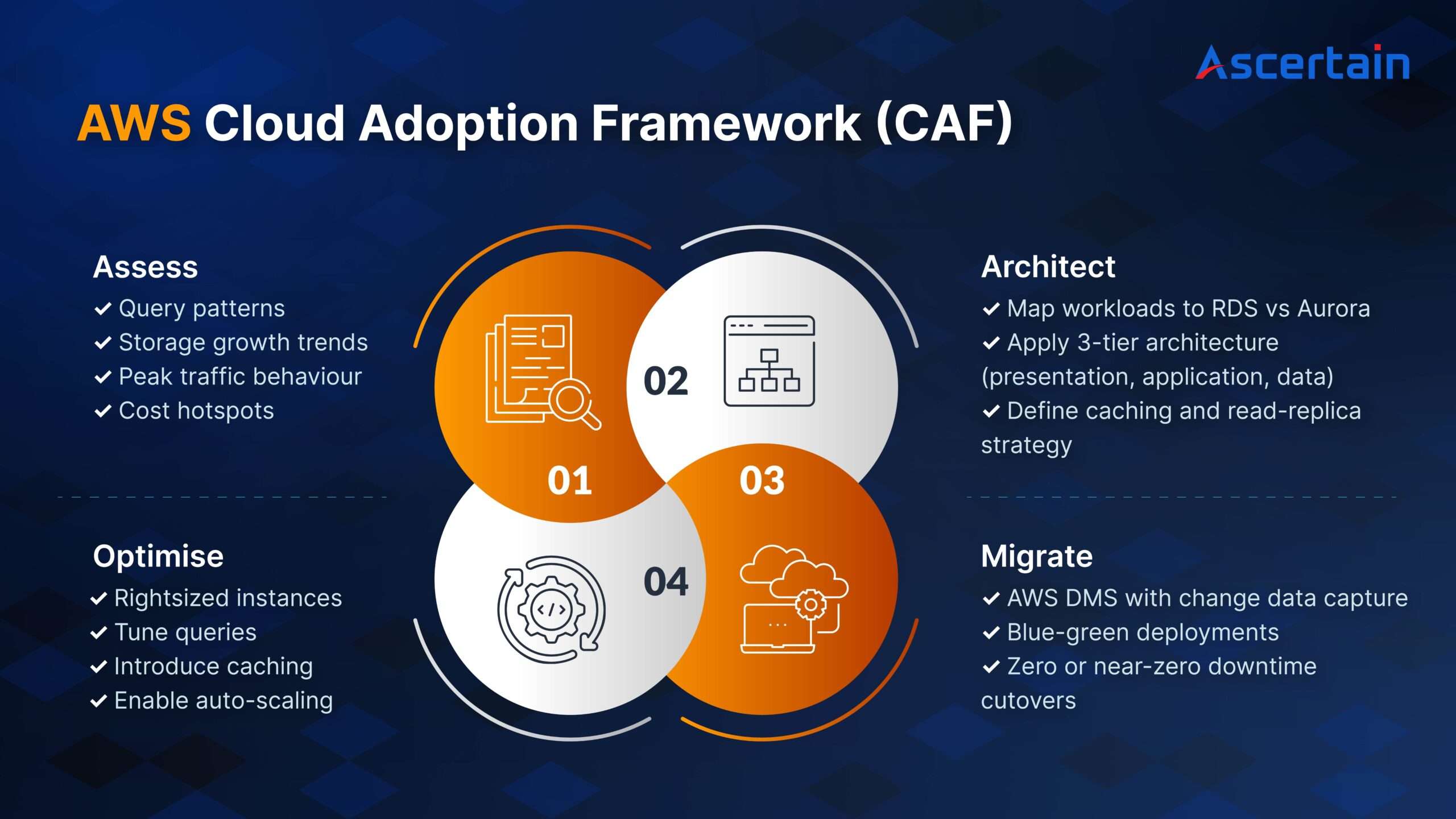

Ascertain follows a phased blueprint aligned with the AWS Cloud Adoption Framework (CAF):
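Whatever the exact phases, a phased migration normally includes a gate before traffic moves from the old database to the new one, and data reconciliation is a common choice for that gate. The sketch below illustrates that technique only and is not Ascertain's blueprint. It compares a row count and a SHA-256 digest of every row, ordered by primary key, between source and target, using in-memory SQLite databases as stand-ins. Table and key names are hypothetical and must come from a trusted migration plan, because they are interpolated into SQL. Across different database engines, values such as decimals and timestamps would need normalising before hashing.

```python
import hashlib
import sqlite3


def table_fingerprint(conn: sqlite3.Connection, table: str, key: str) -> tuple[int, str]:
    """Row count plus a SHA-256 digest of all rows, ordered by `key`."""
    # Identifiers cannot be bound as parameters; they must come from a trusted plan.
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY {key}").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def mismatched_tables(source: sqlite3.Connection, target: sqlite3.Connection,
                      tables: list[tuple[str, str]]) -> list[str]:
    """Tables whose fingerprints differ; an empty list means the gate passes."""
    return [table for table, key in tables
            if table_fingerprint(source, table, key) != table_fingerprint(target, table, key)]


# Example: two in-memory databases holding the same (hypothetical) ledger rows.
source = sqlite3.connect(":memory:")
target = sqlite3.connect(":memory:")
for conn in (source, target):
    conn.execute("CREATE TABLE ledger (id INTEGER PRIMARY KEY, amount_cents INTEGER)")
    conn.executemany("INSERT INTO ledger VALUES (?, ?)", [(1, 5000), (2, -1250)])
print(mismatched_tables(source, target, [("ledger", "id")]))  # []
```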

Observability: The Missing Layer in Many BFSI Clouds

Migrating to the cloud without observability is like flying blind.

AWS-native observability tools provide deep insight:

- Amazon CloudWatch: Metrics, alarms, and cost visibility

- AWS X-Ray: End-to-end transaction tracing

- AWS Distro for OpenTelemetry: Unified logs, traces, and metrics

Together, they enable:

- Early detection of query bottlenecks

- Faster root-cause analysis

- Predictable performance tuning

- Proactive cost optimisation

In Ascertain deployments, observability is designed to align directly with the operational excellence pillar of the AWS Well-Architected Framework.
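Early detection of query bottlenecks comes down to measuring latency per query and comparing it with a budget. The sketch below is a minimal, standard-library illustration that assumes hypothetical query labels and a hypothetical 200 ms budget. In a real deployment, the timings would be exported as CloudWatch metrics or OpenTelemetry spans rather than kept in memory.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class QueryLatencyTracker:
    """Records latency per query label and flags labels over a budget."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock
        self._samples: dict[str, list[float]] = defaultdict(list)  # label -> ms

    def record(self, label: str, latency_ms: float) -> None:
        self._samples[label].append(latency_ms)

    @contextmanager
    def timed(self, label: str):
        """Times the wrapped block and records it, even if the block raises."""
        start = self._clock()
        try:
            yield
        finally:
            self.record(label, (self._clock() - start) * 1000.0)

    def over_budget(self, budget_ms: float, pct: int = 95) -> dict[str, float]:
        """Labels whose nearest-rank `pct` percentile latency exceeds budget_ms."""
        slow = {}
        for label, values in self._samples.items():
            ordered = sorted(values)
            rank = (pct * len(ordered) + 99) // 100  # integer ceil, 1-based
            if ordered[rank - 1] > budget_ms:
                slow[label] = ordered[rank - 1]
        return slow


tracker = QueryLatencyTracker()
for _ in range(20):
    tracker.record("balance_lookup", 8.0)
tracker.record("eod_reconciliation", 950.0)
print(tracker.over_budget(budget_ms=200.0))  # {'eod_reconciliation': 950.0}

with tracker.timed("customer_profile"):
    pass  # the database call would go here
```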

How Ascertain Approaches Database Optimisation for BFSI

Rather than treating databases as isolated components, Ascertain approaches optimisation as a business-critical capability.

Across BFSI engagements, Ascertain has supported:

- Payment platforms requiring strong transactional consistency

- Data integration layers using DataFuze

- AWS-based modernisation for regulated environments

- Real-time reconciliation and reporting systems

- Architectures designed for availability, auditability, and scale

This ensures database decisions align not just with performance targets, but with regulatory expectations, operational resilience, and future growth.

Conclusion

In BFSI, database optimisation directly impacts:

- Customer experience

- Fraud exposure

- Regulatory compliance

- Operating margins

- Speed of innovation

Amazon RDS, Aurora, caching layers, multi-region replication, and deep observability, when applied intentionally, turn cloud databases into a competitive advantage rather than a cost centre.

The institutions that succeed are those that optimise deliberately, not reactively.